EARLY ACCESS OPEN: We’re onboarding pilot partners and a limited investor round for AET-Ψ stability infrastructure

coreXploit

FINDING THE BOUNDARIES OF WHAT IS POSSIBLE

AET-Ψ

OUR FIRST PARADIGM

Stability infrastructure for accelerated AI inference.

AET-Ψ is an inference-time stabilization engine that reduces hidden drift and instance-level divergence in LLM/VLM pipelines—especially under quantization, long context, and aggressive optimization.

Built for measurable KPIs. Designed for few-step refinement.

Proof Strip

Inference-time Stabilization:

A stability layer that acts during inference, when real-world drift actually happens.

Few-step Refinement:

Commercially viable iteration budgets designed for production constraints.

Measurable Outcomes:

We quantify stability with divergence and recovery metrics, not vibes.

Why Stability Now?

The next bottleneck isn’t intelligence. It’s stability.

Modern models can be brilliant and still fail unpredictably when conditions change: lower precision, token reduction, long context, noisy inputs, sensor dropouts, or tight latency budgets. Many failures begin as internal trajectory drift—before they show up in outputs. We build the missing layer: stability as a first-class system property.

What is AET-Ψ?

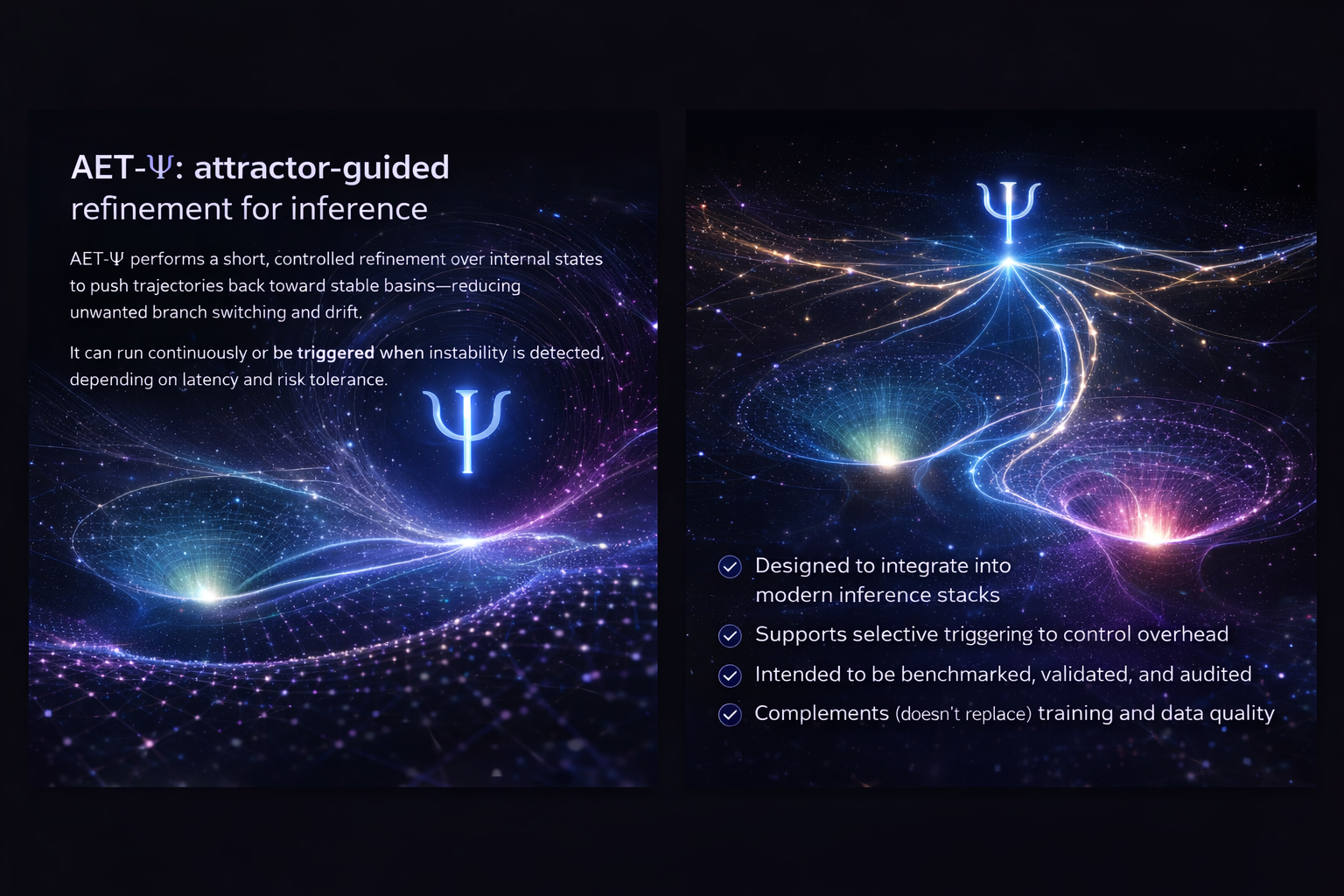

AET-Ψ: attractor-guided refinement for inference.

Designed to integrate into modern inference stacks

Supports selective triggering to control overhead

Intended to be benchmarked, validated, and audited

Complements training and data quality.

AET-Ψ Solutions

Where we deploy stability first.

Accelerated inference stability (Accelerated LLM/VLM inference)

Reduce instability introduced by quantization and aggressive decoding optimizations. Focused on measurable divergence and recovery rates.

Geometric alignment under noise (Industrial alignment)

Refine registration toward correct basins with fewer failure cases caused by local minima and perturbations.

Pose & state refinement (Robotics & AR pose refinement)

Improve consistency under occlusion and noise. Fewer catastrophic wrong-basin locks in iterative estimation loops.

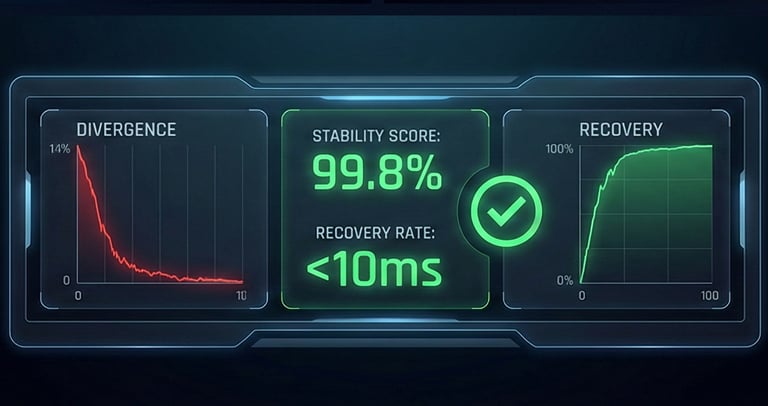

Metrics Preview

Stability you can measure.

Technical Engineering

Accelerated LLM/VLM inference

We evaluate stability with metrics engineering teams can track and optimize:

Divergence Ratio (DR): incidence of instability under accelerated inference

Recovery success rate: how often refinement returns to a correct basin

Long-context degradation slope: stability decay vs context length

Perturbation sensitivity: robustness under controlled noise
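The metrics above are named but not given formulas here. As a minimal sketch, assuming each evaluation instance is logged with a scalar divergence score against a reference run, its context length, and (when refinement was triggered) whether it returned to the correct basin, the four metrics could be computed as follows. The `Run` record, the divergence threshold, and the specific definitions (threshold-based DR, least-squares slope, mean absolute change per unit of noise) are hypothetical choices for illustration, not AET-Ψ's published methodology.

```python
# Hypothetical metric definitions for illustration; not AET-Ψ's published methodology.
from dataclasses import dataclass
from statistics import fmean
from typing import Optional, Sequence


@dataclass
class Run:
    """One evaluation instance (hypothetical log format)."""
    divergence: float          # scalar divergence vs. a reference (e.g. full-precision) run
    context_len: int           # tokens in context when the score was taken
    recovered: Optional[bool]  # None if refinement was not triggered


def divergence_ratio(runs: Sequence[Run], threshold: float) -> float:
    """DR: fraction of instances whose divergence exceeds a chosen threshold."""
    return sum(r.divergence > threshold for r in runs) / len(runs)


def recovery_success_rate(runs: Sequence[Run]) -> float:
    """Among instances where refinement ran, the fraction that recovered."""
    attempts = [r.recovered for r in runs if r.recovered is not None]
    return sum(attempts) / len(attempts) if attempts else float("nan")


def degradation_slope(runs: Sequence[Run]) -> float:
    """Least-squares slope of divergence vs. context length (per token)."""
    xs = [float(r.context_len) for r in runs]
    ys = [r.divergence for r in runs]
    mx, my = fmean(xs), fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx  # requires at least two distinct context lengths


def perturbation_sensitivity(clean: Sequence[float], noisy: Sequence[float],
                             noise_scale: float) -> float:
    """Mean absolute change in divergence per unit of injected noise."""
    return fmean(abs(n - c) for c, n in zip(clean, noisy)) / noise_scale


if __name__ == "__main__":
    # Toy records (made-up numbers, not measured results).
    runs = [
        Run(0.02, 1_000, None),
        Run(0.40, 4_000, True),
        Run(0.55, 8_000, False),
        Run(0.05, 2_000, None),
    ]
    print(divergence_ratio(runs, threshold=0.3))  # 0.5
    print(recovery_success_rate(runs))            # 0.5
    print(degradation_slope(runs))                # positive: divergence grows with length
```

A threshold-based DR keeps the metric easy to track over time, but the threshold itself has to be fixed per pipeline before comparing runs; otherwise DR values are not comparable.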

How partnerships work (3 steps)

Pilot partnerships, designed for speed and credibility.

Step 1: Baseline & instrumentation

Define metrics, triggers, and failure modes in your current pipeline.

Step 2: Integrate & evaluate

Run controlled tests with fixed methodology; measure mean and tail behavior.

Step 3: Decision package

Receive a report: stability deltas, overhead, risk notes, and deployment options.
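The "mean and tail behavior" in Step 2 and the stability deltas in Step 3 can be illustrated with a small comparison. As a minimal sketch, assuming the same inputs are run once through the baseline pipeline and once with stabilization enabled, and a per-request score is logged for each, the deltas could be computed as below. The function names, the nearest-rank percentile, and the choice of p95/p99 as tail cut-offs are illustrative assumptions, not a description of coreXploit's report format.

```python
# Hypothetical helpers for comparing a baseline run against a stabilized run
# over the same inputs; not coreXploit's actual reporting tooling.
import math
from statistics import fmean
from typing import Dict, Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]; values must be non-empty."""
    ordered = sorted(values)
    rank = math.ceil(q * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(values: Sequence[float]) -> Dict[str, float]:
    """Mean plus tail percentiles for one per-request metric."""
    return {
        "mean": fmean(values),
        "p95": percentile(values, 95),
        "p99": percentile(values, 99),
    }


def deltas(baseline: Sequence[float], candidate: Sequence[float]) -> Dict[str, float]:
    """Candidate minus baseline for each statistic (negative means lower)."""
    base, cand = summarize(baseline), summarize(candidate)
    return {key: cand[key] - base[key] for key in base}


if __name__ == "__main__":
    # Toy per-request divergence scores (made-up numbers, not measured results).
    baseline = [float(v) for v in range(1, 101)]
    stabilized = [v * 0.5 for v in baseline]
    print(deltas(baseline, stabilized))
    # {'mean': -25.25, 'p95': -47.5, 'p99': -49.5}
```

Running the same `deltas` on per-request latencies instead of divergence scores gives the overhead side of the comparison. Nearest-rank percentiles always return an observed value; with fewer than 100 requests, p99 is simply the maximum, so tail estimates need enough samples to be meaningful.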

Disclaimer: We prioritize evidence-first pilots and publish methodology before marketing claims.

coreXploit

Contact

Newsletter

info@corexploit.com

(+57) 313 449 7013

© 2024. All rights reserved.